Natural Language Processing (NLP) is a field of computer science, artificial intelligence, and computational linguistics concerned with the interactions between computers and human (natural) languages. As such, NLP is related to the area of human–computer interaction. (Wikipedia)

History of NLP

The history most often told under the acronym NLP is that of neuro-linguistic programming, a separate discipline that shares the abbreviation with natural language processing. That NLP grew out of the ‘behavioural modelling’ work of Frank Pucelik, John Grinder and Richard Bandler in their study of Satir, Perls, and Erickson. (nlp-now.co.uk) Back then, Bandler was a psychology student and Grinder was an associate professor at the University of California. The early NLP studies started with Bandler and Pucelik, who were later joined by Grinder. The first NLP study examined writings and tape recordings to analyze the work and success rate of Virginia Satir and Fritz Perls.

Development of NLP

Early computational approaches to language research focused on automating the analysis of the linguistic structure of language and developing basic technologies such as machine translation, speech recognition, and speech synthesis. Today’s researchers refine and make use of such tools in real-world applications, creating spoken dialogue systems and speech-to-speech translation engines, mining social media for information about health or finance, and identifying sentiment and emotion toward products and services. (Advances in natural language processing, Science, Vol. 349, no. 6245, pp. 261-266, 2015)
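To make "identifying sentiment" a little more concrete, here is a minimal sketch of a lexicon-based sentiment scorer in Python. The word lists and the function names `sentiment_score` and `sentiment_label` are made up for illustration. Production systems typically rely on much larger lexicons or trained models, so treat this as a toy under those assumptions rather than how any particular product works.

```python
import re

# Hypothetical toy lexicons (assumption): real systems use far larger
# resources or statistical models instead of hand-picked word sets.
POSITIVE = {"good", "great", "love", "excellent", "happy"}
NEGATIVE = {"bad", "poor", "hate", "terrible", "slow"}


def sentiment_score(text: str) -> int:
    """Return (number of positive words) minus (number of negative words)."""
    tokens = re.findall(r"[a-z']+", text.lower())
    return sum((tok in POSITIVE) - (tok in NEGATIVE) for tok in tokens)


def sentiment_label(text: str) -> str:
    """Map the numeric score to a coarse label."""
    score = sentiment_score(text)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"


if __name__ == "__main__":
    print(sentiment_label("I love this phone, the camera is great"))
    print(sentiment_label("The delivery was slow and the support was terrible"))
```

Counting words like this ignores negation ("not good") and sarcasm, which is one reason real sentiment analysis needs more than a word list.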

Applications of Natural Language Processing

- As an enhancement for grammar-checking software, writing platforms, or keyword research tools such as Twinword Ideas.

- A better human-computer interface that could convert from a natural language into a computer language and vice versa. A natural language system could be the interface to a database system, such as for a travel agent to use in making reservations. A visually impaired person could use a natural language system (with speech recognition) to interact with computers. (Introduction to natural language processing, CCSI)

- As an add-on for a language translation program that translates from one human language to another (for instance, English to Italian). Natural language processing can produce a rudimentary translation before a human translator gets involved, which cuts down the time needed to translate documents.

- A computer that can understand and process human language, enabling it to convert large amounts of information from e-books or websites into structured data before storing it in a large database.
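As a rough illustration of turning natural language into structured data (the travel-agent and database ideas in the list above), the following Python sketch pulls an origin, a destination, and an optional date out of a plain-English request. The pattern, the field names, and the `parse_travel_request` function are assumptions invented for this example. A real natural language interface would need far more robust parsing than a single regular expression.

```python
import re
from typing import Optional

# Hypothetical pattern (assumption): "from <origin> to <destination>"
# optionally followed by "on YYYY-MM-DD" at the end of the request.
REQUEST_PATTERN = re.compile(
    r"from\s+(?P<origin>[A-Za-z ]+?)\s+to\s+(?P<destination>[A-Za-z ]+?)"
    r"(?:\s+on\s+(?P<date>\d{4}-\d{2}-\d{2}))?\s*$",
    re.IGNORECASE,
)


def parse_travel_request(text: str) -> Optional[dict]:
    """Extract structured fields from a free-text travel request.

    Returns a dict with the fields that were found, or None when the
    request does not match the expected shape.
    """
    cleaned = text.strip().rstrip(".?!")
    match = REQUEST_PATTERN.search(cleaned)
    if match is None:
        return None
    # Keep only the fields that were actually present in the request.
    return {key: value for key, value in match.groupdict().items() if value}


if __name__ == "__main__":
    print(parse_travel_request("Book a flight from Boston to Rome on 2024-05-01"))
    print(parse_travel_request("I need to go from New York to San Francisco."))
```

The resulting dictionary could then be used to build a database query or fill in a reservation form, which is the kind of structured output the applications above depend on.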

How Is Twinword’s NLP Technology Different?

Twinword’s patented NLP technology is based on compiling a massive database that understands and extracts true knowledge from websites and information repositories in a way that mimics natural human thought. The machine learning technology gets smarter as people around the world contribute to the database in real-life settings, making it possible to analyze trends and patterns per region.

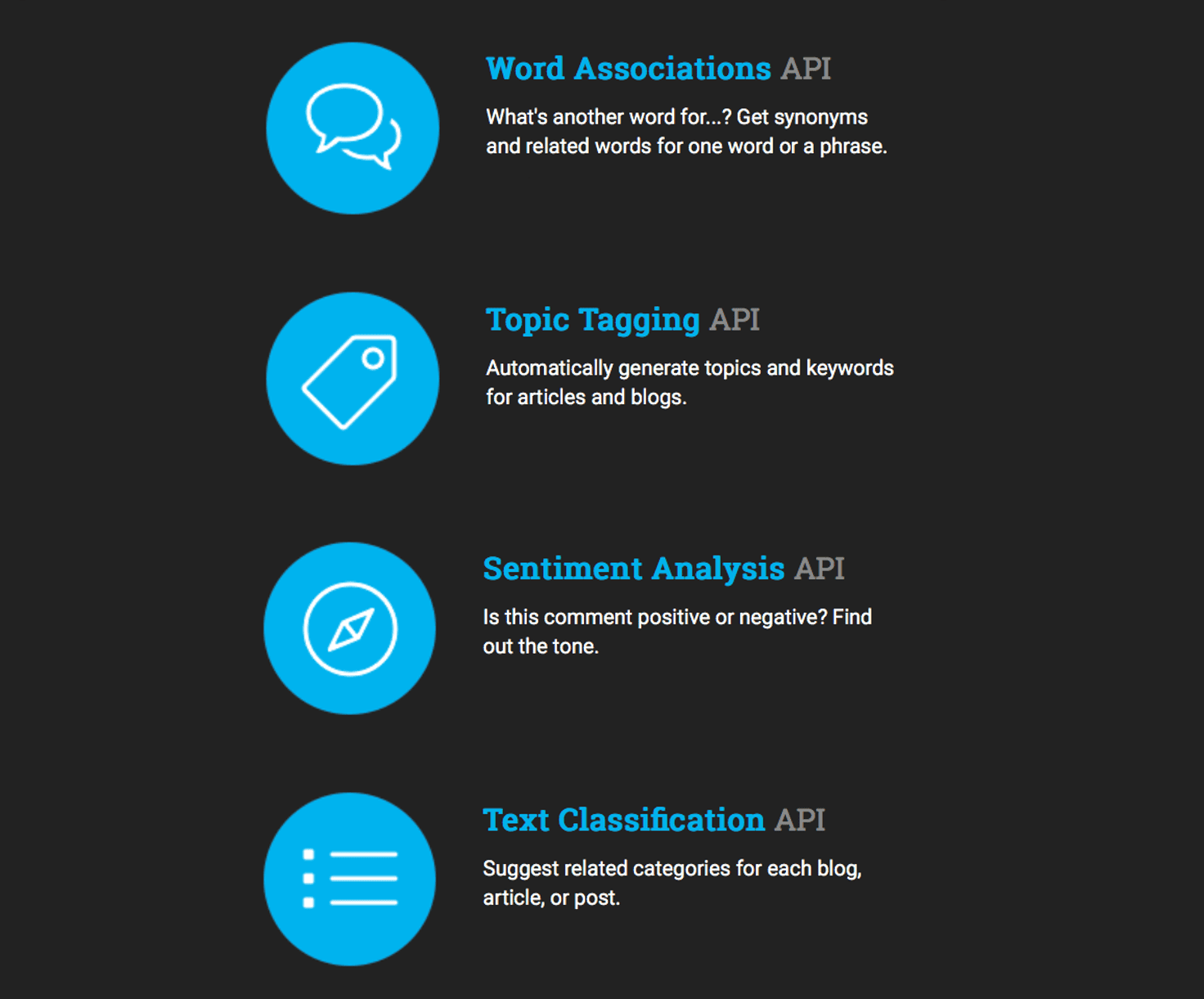

Twinword provides text analysis APIs that can understand and associate words in the same way humans do. Our APIs are currently used by search engines, e-commerce sites, and many other developers creating software that analyzes and categorizes text. Beyond that, we also have a few consumer products for writing, searching, and learning that use our APIs and showcase their power.

See for yourself how you can apply NLP technologies.
